Introduction

The robotics industry is racing to develop humanoid and intelligent robots capable of natural, human-like movements. A critical bottleneck lies in acquiring high-quality human motion data efficiently and affordably for robot training, algorithm development, and real-time control. Traditional motion capture (mocap) solutions, whether marker-based or inertial, suffer from complex setup, restrictive wearables, or exorbitant costs, which limits their scalability in robotics research and production.

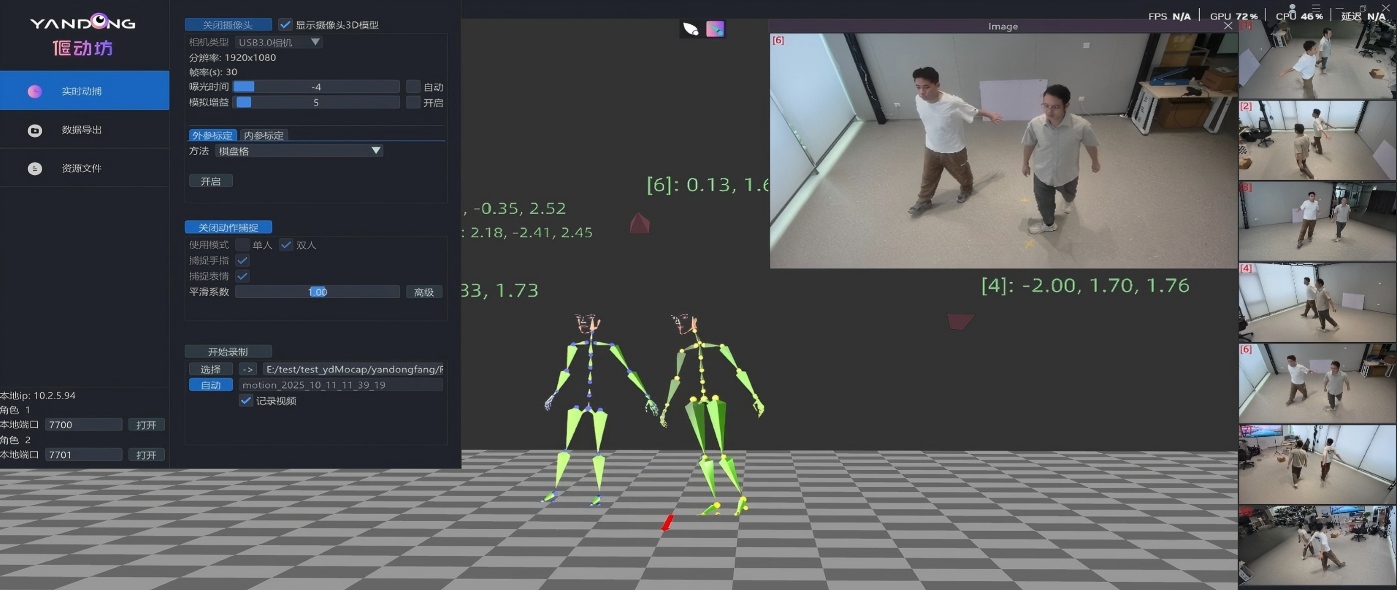

Enter the Virdyn YanDong Markerless Motion Capture System, a game-changing, all-in-one solution designed to eliminate these pain points. Combining advanced hardware (YanDong Cameras) with powerful software (YanDongFang), this system enables markerless, suitless, helmetless real-time motion capture with professional-grade precision. Tailored for the robotics industry, YanDong empowers engineers, researchers, and developers to effortlessly capture human motion data, accelerate robot learning, and unlock new possibilities in human-robot interaction.

What Is the Virdyn YanDong Markerless Motion Capture System?

YanDong is a complete, end-to-end markerless mocap solution built by Virdyn, integrating 7 high-resolution industrial cameras and proprietary YanDongFang software. Its technical workflow—multi-camera video capture → AI human reconstruction → AI automatic bone solving—delivers accurate, real-time 3D motion data without requiring performers to wear any equipment or markers.

Core Hardware & Software Specifications

The YanDong system is engineered for professional performance, with specs optimized for both precision and usability:

- Hardware: 7 × 3.2MP color industrial global shutter cameras (ultra-wide-angle, low-distortion lenses)

- Max Frame Rate: 60fps (smooth, high-speed motion capture)

- Capture Scope: Full-body (21 joints) + facial expressions + finger movements (30 joints)

- Latency: <80ms (real-time data streaming for instant robot feedback)

- Multi-Person Capture: Supports simultaneous tracking of 2 subjects

- Synchronization: Multi-camera video synchronous recording & capture

- Capture Area: 4m × 4m (spacious enough for complex human movements)

- Software Ecosystem: YanDongFang software + plugins for UE/Unity/Maya/iClone/Blender (real-time data integration)
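A few back-of-the-envelope figures follow directly from these specifications. The short Python sketch below derives them; the constant names are chosen here for illustration, and the results are simple arithmetic on the listed specs, not measured values.

```python
# Figures taken from the specification list; the names are illustrative only.
FPS = 60                      # maximum frame rate
LATENCY_MS = 80               # stated upper bound on latency
CAMERAS = 7
MEGAPIXELS_PER_CAMERA = 3.2
BODY_JOINTS = 21
FINGER_JOINTS = 30

# Time between consecutive frames at the maximum frame rate.
frame_interval_ms = 1000 / FPS

# The latency bound expressed as a number of frame intervals.
latency_in_frames = LATENCY_MS / frame_interval_ms

# Raw imagery produced by all cameras together, in megapixels per second.
pixel_rate_mp_per_s = CAMERAS * MEGAPIXELS_PER_CAMERA * FPS

# Skeletal joints per subject (facial expressions are tracked separately).
skeletal_joints = BODY_JOINTS + FINGER_JOINTS

print(f"Frame interval:  {frame_interval_ms:.1f} ms")
print(f"Latency bound:   {latency_in_frames:.1f} frame intervals")
print(f"Raw pixel rate:  {pixel_rate_mp_per_s:.0f} MP/s")
print(f"Skeletal joints: {skeletal_joints}")
```

At 60fps a new frame arrives roughly every 16.7 ms, so the 80 ms latency bound corresponds to just under five frame intervals, and the seven cameras together produce about 1,344 megapixels of raw imagery per second.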

How It Works

- Setup: Deploy 7 YanDong cameras around a 4m×4m capture area; no markers, suits, or helmets required.

- Capture: Perform natural movements—cameras record synchronized video streams at 60fps.

- AI Reconstruction: YanDongFang’s AI algorithms process video to reconstruct 3D human models in real time.

- Bone Solving: Automatic AI bone solving generates precise 3D joint data (21 body + 30 finger joints).

- Data Export: Stream or export motion data to robotics platforms, simulation tools, or 3D software via plugins.
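YanDongFang's reconstruction algorithms are proprietary and are not described in this article. As a generic illustration of why several synchronized views make 3D recovery possible, the sketch below triangulates a single point from two camera rays using the classic midpoint method. This is not Virdyn's method; the function name, camera positions, and ray directions are all hypothetical.

```python
def _dot(u, v):
    """Dot product of two 3D vectors."""
    return sum(a * b for a, b in zip(u, v))


def triangulate_midpoint(o1, d1, o2, d2):
    """Return the midpoint of the shortest segment between two 3D rays.

    o1, o2 are camera centres; d1, d2 are ray directions pointing
    from each camera towards the observed joint.
    """
    w0 = [a - b for a, b in zip(o1, o2)]
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < 1e-12:
        raise ValueError("rays are parallel; the point cannot be triangulated")
    # Ray parameters of the closest points on each ray.
    t1 = (b * e - c * d) / denom
    t2 = (a * e - b * d) / denom
    p1 = [o + t1 * k for o, k in zip(o1, d1)]
    p2 = [o + t2 * k for o, k in zip(o2, d2)]
    return [(x + y) / 2 for x, y in zip(p1, p2)]


# Hypothetical setup: two cameras 4 m apart at 2 m height, both seeing one joint.
joint = triangulate_midpoint(
    o1=[0.0, 0.0, 2.0], d1=[2.0, 2.0, -1.0],
    o2=[4.0, 0.0, 2.0], d2=[-2.0, 2.0, -1.0],
)
print(joint)
```

The two rays only describe the same physical point if both images were taken at the same instant, which is why synchronized recording matters for a moving subject.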

Key Advantages for Robotics Professionals

In an industry where efficiency, cost-effectiveness, and data quality are paramount, YanDong outperforms competitors like The Captury and Move.ai with unique benefits tailored to robotics use cases:

1. Zero Wearables, Zero Markers: Unrestricted Natural Motion

Traditional mocap systems force performers into tight suits or require hours of marker application—restricting movement and introducing data noise. YanDong’s markerless design lets subjects move freely in everyday clothing, capturing authentic, natural human motions critical for training robots to mimic human behavior accurately.

2. Professional-Grade Precision & Real-Time Performance

- High Resolution & Frame Rate: 3.2MP cameras at 60fps ensure crisp capture of fast, complex movements (e.g., dancing, lifting, navigating obstacles).

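- Microsecond-Level Synchronization: All 7 cameras capture each frame at the same instant, so every view shows the same pose, which accurate 3D reconstruction depends on. The global shutter sensors also avoid the rolling-shutter skew that fast motion would otherwise introduce.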

- Ultra-Low Latency (<80ms): Real-time data streaming enables instant robot control feedback—critical for teleoperation and dynamic human-robot interaction.

3. Unmatched Cost-Performance: Permanent Licensing, No Annual Fees

Unlike competitors such as The Captury ($365/year subscription) and Move.ai (pay-per-use or expensive enterprise plans), YanDong is offered as a one-time purchase with permanent, unlimited use. There are no recurring fees and no usage caps, which makes it well suited to research labs, startups, and enterprises with long-term robotics projects.

4. Seamless Integration with Robotics & Simulation Workflows

YanDong’s ecosystem is built for robotics developers:

- Real-Time Plugins: Directly stream motion data to UE/Unity/Maya/Blender—common tools for robot simulation and animation.

- Standard Data Formats: Export data in CSV/FBX/BVH, compatible with robotics platforms like ROS, Isaac Lab, and MuJoCo.

- Easy Retargeting: Clean, consistent joint data simplifies retargeting human motions to humanoid robot skeletons (e.g., Unitree G1, Atlas).
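BVH is a plain-text format with a HIERARCHY section that names the skeleton's joints and a MOTION section that gives the frame count and frame time. As a minimal sketch of a first step before retargeting, the Python below reads those fields using only the standard library. The sample file and its joint names (`Hips`, `Spine`) are hypothetical; the skeleton that YanDongFang actually exports is not documented here.

```python
SAMPLE_BVH = """\
HIERARCHY
ROOT Hips
{
  OFFSET 0.0 0.0 0.0
  CHANNELS 6 Xposition Yposition Zposition Zrotation Xrotation Yrotation
  JOINT Spine
  {
    OFFSET 0.0 10.0 0.0
    CHANNELS 3 Zrotation Xrotation Yrotation
    End Site
    {
      OFFSET 0.0 5.0 0.0
    }
  }
}
MOTION
Frames: 2
Frame Time: 0.016667
0 0 0 0 0 0 0 0 0
0 0 0 0 0 0 0 0 0
"""


def parse_bvh_header(text):
    """Return (joint_names, frame_count, frame_time_s) from BVH text."""
    joints, frames, frame_time = [], None, None
    for line in text.splitlines():
        parts = line.split()
        if not parts:
            continue
        if parts[0] in ("ROOT", "JOINT"):
            joints.append(parts[1])
        elif parts[0] == "Frames:":
            frames = int(parts[1])
        elif parts[:2] == ["Frame", "Time:"]:
            frame_time = float(parts[2])
            break  # everything after this line is per-frame channel data
    return joints, frames, frame_time


joints, frames, frame_time = parse_bvh_header(SAMPLE_BVH)
print(joints, frames, frame_time)
```

Listing the joint names up front makes it easier to build the mapping from the captured skeleton to a particular robot's joints before any motion data is processed.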

Applications in the Robotics Industry

YanDong’s versatility makes it indispensable across robotics research, development, and commercialization:

1. Robot Motion Data Collection & Training

- Human-to-Robot Motion Transfer: Capture human movements (walking, running, dancing, manipulating objects) and use the data to train robots via reinforcement learning (RL) or imitation learning.

- Dataset Creation: Build large, high-quality human motion datasets for AI model training—eliminating the need for expensive manual keyframing.

- Dynamic Movement Training: Capture fast, agile motions (e.g., sports, emergency responses) to enhance robot agility and adaptability.
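Reference motions for imitation or reinforcement learning often need joint velocities as well as positions. A minimal sketch, assuming one coordinate of one joint has been exported as a list of samples at a fixed frame rate (the function name and sample values are hypothetical):

```python
def finite_difference_velocities(positions, fps=60.0):
    """Forward-difference velocities for one coordinate track.

    positions: samples taken at a constant frame rate (units of your choice).
    Returns len(positions) - 1 velocities in units per second.
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    return [(nxt - cur) * fps for cur, nxt in zip(positions, positions[1:])]


# Hypothetical wrist height in metres over three consecutive 60fps frames.
wrist_z = [0.0, 0.1, 0.3]
velocities = finite_difference_velocities(wrist_z, fps=60.0)
print(velocities)
```

Finite differences amplify noise in the position data, so in practice the positions are usually smoothed before differentiating.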

2. Humanoid Robot Development & Testing

- Biomechanical Analysis: Study human joint angles, movement trajectories, and balance to optimize robot mechanical design and control algorithms.

- Gait Optimization: Capture natural human walking/running gaits to refine robot locomotion, improving stability and energy efficiency.

- Facial & Gesture Interaction: Capture facial expressions and hand gestures to enable robots to communicate naturally with humans—critical for service and companion robots.
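For the biomechanical analysis mentioned above, a joint angle can be computed from three captured 3D joint positions. The sketch below is a minimal standard-library example; the joint coordinates are made up for illustration and the function name is not part of any YanDong API.

```python
import math


def joint_angle_deg(a, b, c):
    """Angle at joint b, in degrees, formed by the segments b->a and b->c."""
    ba = [p - q for p, q in zip(a, b)]
    bc = [p - q for p, q in zip(c, b)]
    norm_ba = math.sqrt(sum(x * x for x in ba))
    norm_bc = math.sqrt(sum(x * x for x in bc))
    if norm_ba == 0.0 or norm_bc == 0.0:
        raise ValueError("joint positions must be distinct")
    cos_angle = sum(x * y for x, y in zip(ba, bc)) / (norm_ba * norm_bc)
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, cos_angle))
    return math.degrees(math.acos(cos_angle))


# Hypothetical hip, knee, and ankle positions in metres.
hip, knee, ankle = [0.0, 1.0, 0.0], [0.0, 0.5, 0.0], [0.3, 0.5, 0.0]
knee_angle = joint_angle_deg(hip, knee, ankle)
print(f"Knee angle: {knee_angle:.1f} degrees")
```

Applying the same function frame by frame yields a joint-angle trajectory that can be compared against a robot joint's range of motion.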

3. Robot Teleoperation & Remote Control

- Real-Time Teleoperation: Low-latency motion data allows operators to control robots remotely with natural body movements—ideal for dangerous environments (disaster response, industrial inspection).

- Intuitive Human-Robot Interaction: Enable robots to mirror human movements in real time, enhancing collaboration in manufacturing, healthcare, and education.
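When streamed joint angles drive a physical robot, it is common to smooth the signal and clamp it to the robot's joint limits before sending commands. The sketch below shows one simple way to do that with an exponential moving average; the class name, the smoothing factor, and the limit values are hypothetical and would need tuning for a real robot.

```python
class JointCommandFilter:
    """Exponentially smooth a streamed joint angle and clamp it to limits."""

    def __init__(self, alpha, lower, upper):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        if lower > upper:
            raise ValueError("lower limit must not exceed upper limit")
        self.alpha = alpha      # 1.0 means no smoothing
        self.lower = lower      # robot joint limits, in radians
        self.upper = upper
        self._state = None      # smoothed angle, set on the first sample

    def update(self, angle):
        """Feed one captured angle (radians) and return the safe command."""
        if self._state is None:
            self._state = angle
        else:
            self._state = self.alpha * angle + (1.0 - self.alpha) * self._state
        return min(self.upper, max(self.lower, self._state))


# Hypothetical elbow joint limited to [-1.0, 0.7] rad.
elbow = JointCommandFilter(alpha=0.5, lower=-1.0, upper=0.7)
commands = [elbow.update(sample) for sample in (0.0, 1.0, 1.0)]
print(commands)
```

Smoothing trades responsiveness for stability: a smaller `alpha` suppresses jitter but adds lag on top of the capture latency, so the value should be chosen with the overall latency budget in mind.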

4. Simulation & Digital Twin Validation

- Sim2Real Transfer: Capture human motions in a controlled environment, validate them in simulation (e.g., MuJoCo) and visualization (e.g., RViz) tools, and then deploy to real robots, reducing deployment risks and costs.

- Digital Twin Calibration: Use precise human motion data to calibrate robot digital twins, ensuring accurate simulation of real-world robot behavior.
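Simulators and robot controllers usually run at a different rate from the 60fps capture, so captured trajectories typically need resampling before playback. A minimal sketch using linear interpolation follows; the 120 Hz target rate and the sample values are hypothetical, and joint rotations stored as quaternions would need spherical interpolation instead.

```python
def resample_linear(samples, src_hz, dst_hz):
    """Resample a uniformly sampled scalar track from src_hz to dst_hz."""
    if src_hz <= 0 or dst_hz <= 0:
        raise ValueError("rates must be positive")
    if len(samples) < 2:
        return list(samples)
    duration = (len(samples) - 1) / src_hz
    # Small epsilon so rounding error does not drop the final sample.
    n_out = int(duration * dst_hz + 1e-9) + 1
    out = []
    for k in range(n_out):
        pos = k * src_hz / dst_hz              # position in source-frame units
        i = min(int(pos), len(samples) - 2)    # index of the left neighbour
        frac = pos - i
        out.append(samples[i] * (1.0 - frac) + samples[i + 1] * frac)
    return out


# Three captured frames at 60fps, resampled to a hypothetical 120 Hz sim step.
captured = [0.0, 1.0, 2.0]
resampled = resample_linear(captured, src_hz=60, dst_hz=120)
print(resampled)
```

Keeping the resampling step explicit also makes it easy to replay the same capture at several simulation rates when checking how sensitive a controller is to timing.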

Conclusion

The future of robotics hinges on robots’ ability to move and interact like humans—and high-quality motion data is the foundation of this capability. Virdyn YanDong Markerless Motion Capture System removes the barriers that have long plagued robotics developers: complex setup, restrictive wearables, and prohibitive costs.

With its 7 industrial cameras, 60fps precision, full-body+face+finger tracking, ultra-low latency, and permanent licensing, YanDong delivers unmatched value for robotics research, motion training, teleoperation, and simulation. It’s not just a mocap system—it’s a catalyst for innovation, empowering you to build robots that move naturally, interact intuitively, and perform exceptionally.

Ready to transform your robotics motion data workflow? Contact Virdyn today to learn more about YanDong and explore how it can accelerate your robotics projects.

Post time: May-09-2026