Introduction

As humanoid robot technology advances rapidly worldwide, the ability to perform complex, agile, and expressive movements, especially realistic dance performances, has become an important benchmark for evaluating robot motion control, algorithm optimization, and mechanical stability. Behind every smooth, natural robot movement lies a standardized, reliable technical workflow that turns human artistic motion into stable, repeatable robot "muscle memory."

For universities, research laboratories, and creative studios, building a repeatable pipeline from human motion capture to real robot deployment is essential for robotics teaching, student training, scientific research projects, and commercial robot performance development. Without a professional inertial motion capture system and standardized data processing tools, researchers and engineers often face unstable motion data, messy retargeting results, failed simulation training, and high risks during real robot testing.

In this blog, we will introduce the DreamsCap X1 professional inertial motion capture system and its complete five-step practical workflow, explaining how to accurately capture, correct, optimize, simulate, and finally deploy real human movements onto physical humanoid robots. This mature solution is specially designed for humanoid robot education, laboratory research, and studio motion customization projects.

Why Choose DreamsCap X1 for Humanoid Robot Motion Training & Deployment?

Traditional optical motion capture requires large capture spaces, complicated calibration, and expensive hardware, making it a poor fit for daily university teaching and repeated laboratory experiments. Ordinary low-precision inertial mocap devices suffer from magnetic interference, data jitter, and unstable long-term recording, so they cannot meet the requirements of robot reinforcement learning and high-precision motion training.

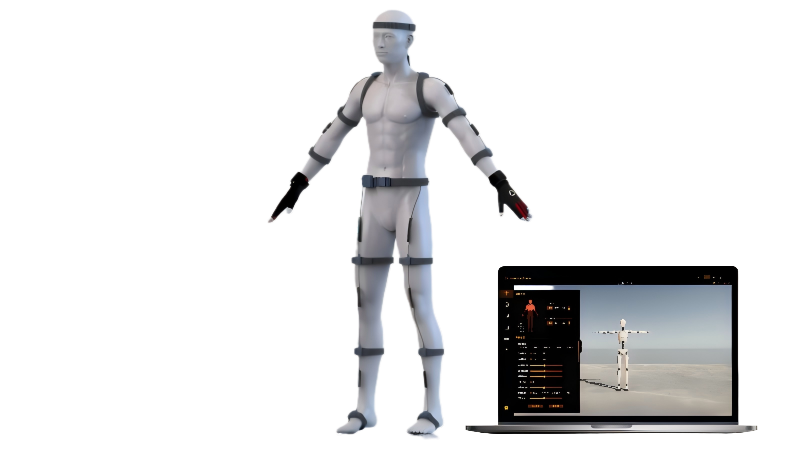

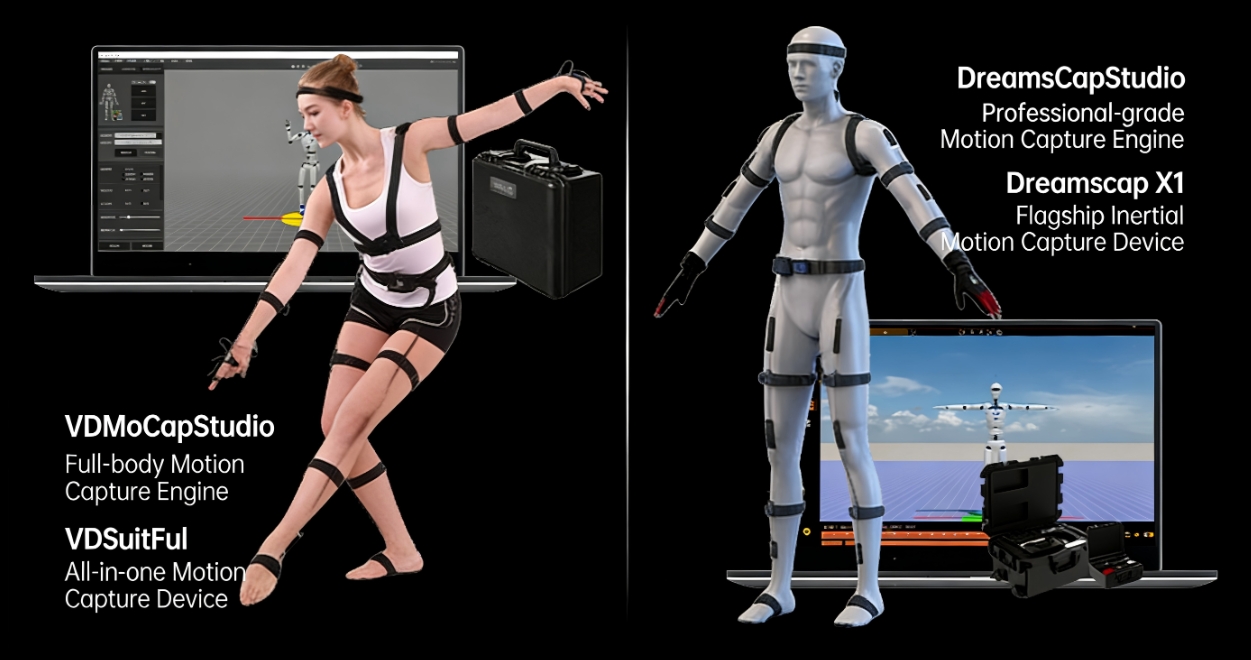

The DreamsCap X1-R high-precision anti-magnetic inertial motion capture system is engineered specifically for professional motion research and humanoid robot data collection. It pairs 31 high-precision body and hand sensor nodes with the DreamsCap Studio mocap engine to deliver high accuracy, low latency, strong resistance to magnetic interference, and stable data output over long recording sessions. This matches the data collection needs of university robot labs, research institutions, and motion creation studios.

For teams with tighter budgets, Virdyn also provides the VDSuit Full entry-level inertial mocap solution with 27 body and hand sensor nodes, which covers routine teaching and simple robot motion capture tasks. Users can choose the mocap hardware that fits their project difficulty and research requirements.

The 5-Step Standard Workflow: From Human Motion Capture to Real Humanoid Robot Deployment

Step 1: Professional Inertial Motion Capture & Raw Motion Data Recording

The foundation of robot motion deployment is high-quality raw human motion data. Poor capture data leads to repeated corrections in later stages and can even cause robot training to fail.

Professional performers or operators wear either the DreamsCap X1-R (31 sensor nodes for fine-grained full-body and finger capture) or the VDSuit Full (27 sensor nodes for conventional motion recording). The matching software engine, DreamsCap Studio or VDMocap Studio, provides real-time motion preview and formal data recording. Built-in multi-layer AHRS sensor fusion algorithms and anti-magnetic interference technology help the system avoid the data drift, jitter, and signal loss commonly found in ordinary mocap devices.
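To give a feel for what sensor fusion does, here is a minimal sketch of a single-axis complementary filter, one of the simplest fusion schemes. It is not the algorithm used inside DreamsCap Studio, which the source does not describe; the function name, the `alpha` weight, and the pitch sign convention are all assumptions made for illustration.

```python
import math

def complementary_filter(samples, dt, alpha=0.98):
    """Fuse gyro rate and accelerometer tilt into one pitch estimate (radians).

    samples: iterable of (gyro_rate_rad_s, accel_x, accel_z) tuples.
    alpha:   weight on the integrated gyro path; (1 - alpha) goes to the accelerometer.
    """
    pitch = 0.0
    history = []
    for gyro_rate, accel_x, accel_z in samples:
        # Tilt implied by the gravity direction: noisy, but it does not drift.
        accel_pitch = math.atan2(accel_x, accel_z)
        # Gyro integration: smooth, but it drifts when the rate carries a bias.
        gyro_pitch = pitch + gyro_rate * dt
        pitch = alpha * gyro_pitch + (1.0 - alpha) * accel_pitch
        history.append(pitch)
    return history

# A stationary sensor whose gyro reports a constant 0.05 rad/s bias (made-up data).
biased = [(0.05, 0.0, 9.81)] * 1000
fused = complementary_filter(biased, dt=0.01)
gyro_only = 0.05 * 0.01 * 1000  # pure integration of the same bias over 10 s
print(f"gyro only: {gyro_only:.3f} rad, fused: {fused[-1]:.4f} rad")
```

With the accelerometer pulling the estimate back toward the gravity reference, the fused pitch stays bounded while pure gyro integration keeps drifting. Production AHRS pipelines work in 3D and typically add magnetometer handling on top of this idea.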

Whether recording daily basic actions, sports movements, or complex dance choreography, the system stably captures every body posture, joint angle change, and subtle hand movement, completing high-fidelity initial motion data collection and laying a solid foundation for subsequent robot retargeting and reinforcement learning.

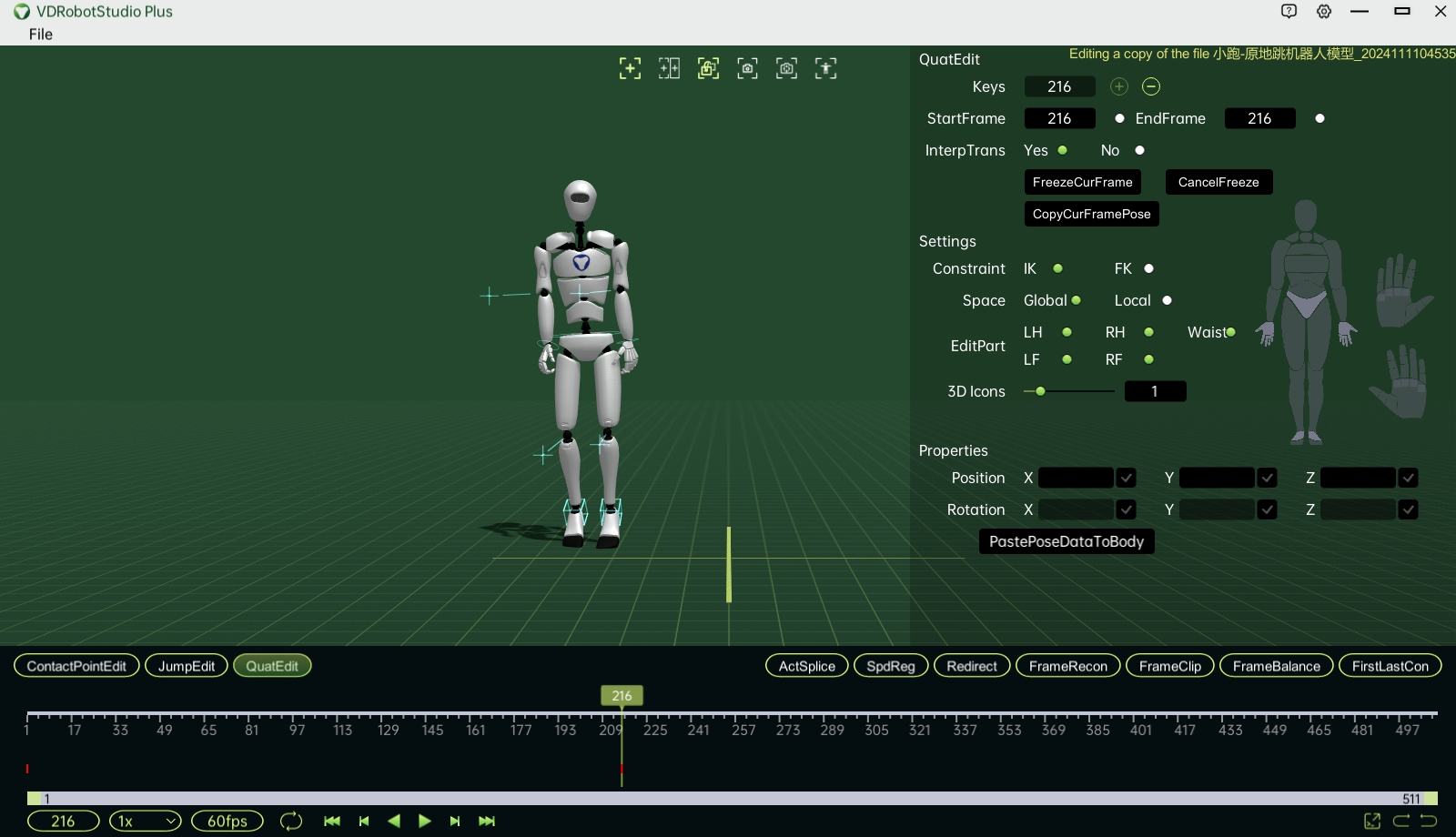

Step 2: Motion Data Refinement & Robot Skeleton Retargeting in VDRobot Studio

Raw motion capture data cannot be applied directly to humanoid robots. Human joint structures, ranges of motion, and movement habits differ substantially from a robot's mechanical structure. Raw data usually contains jitter, implausible joint angles, and trajectories that exceed joint limits, which can cause shaking, self-collision, or even hardware damage if deployed directly.

Therefore, the second core step is data cleaning and retargeting. Import the recorded motion data into VDRobot Studio, Virdyn’s in-house robot motion data conversion platform. There, engineers and researchers can apply smoothing, trajectory adjustment, joint angle limit correction, and fine-tuning of the motion rhythm.
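The two most mechanical parts of this cleanup, smoothing and joint limit correction, can be sketched in a few lines. This is an illustration of the idea only, not VDRobot Studio's implementation; the helper names and the knee limits below are hypothetical, and real limits should come from the robot's model file.

```python
def moving_average(values, window=5):
    """Centered moving average; the window shrinks at the ends of the clip."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        lo = max(0, i - half)
        hi = min(len(values), i + half + 1)
        smoothed.append(sum(values[lo:hi]) / (hi - lo))
    return smoothed

def clamp_to_limits(values, lower, upper):
    """Clip every sample into [lower, upper]."""
    return [min(max(v, lower), upper) for v in values]

# Hypothetical knee-pitch limits in radians (assumption, not a real robot spec).
KNEE_LIMITS = (-0.1, 2.0)

# Made-up raw knee angles with a stretch that exceeds the upper limit.
raw_knee = [0.0, 0.1, 2.6, 2.7, 2.6, 0.5, 0.4, 0.3]
cleaned = clamp_to_limits(moving_average(raw_knee, window=3), *KNEE_LIMITS)
print([round(v, 3) for v in cleaned])
```

Smoothing first and clamping second keeps the clamp as a hard safety net: the filter removes jitter, and anything that still exceeds the limit is cut off rather than passed on to training.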

After optimization, the motion data is retargeted to the target humanoid robot skeleton (such as Unitree G1 and other mainstream robot models). Finally, export the robot motion dataset as CSV (LaFAN format), which is compatible with common robot simulation training environments and reinforcement learning platforms.
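As a rough picture of what a per-frame motion CSV can look like, the sketch below writes and reads rows of root position, root orientation quaternion, and joint angles. The column layout is an assumption for illustration and is not confirmed by the source; check the actual VDRobot Studio export before relying on it. `NUM_JOINTS` and both helper functions are hypothetical.

```python
import csv
import io

NUM_JOINTS = 3  # hypothetical; a real humanoid has many more actuated joints

def write_motion_csv(frames, stream):
    """Write one row per frame: root position, root quaternion, joint angles."""
    writer = csv.writer(stream)
    for root_pos, root_quat, joints in frames:
        writer.writerow([f"{v:.6f}" for v in (*root_pos, *root_quat, *joints)])

def read_motion_csv(stream):
    """Parse rows back into (root_pos, root_quat, joints) tuples of floats."""
    frames = []
    for row in csv.reader(stream):
        values = [float(v) for v in row]
        if len(values) != 7 + NUM_JOINTS:
            raise ValueError(f"expected {7 + NUM_JOINTS} columns, got {len(values)}")
        frames.append((tuple(values[0:3]), tuple(values[3:7]), tuple(values[7:])))
    return frames

# Two made-up frames: (x, y, z), (qx, qy, qz, qw), joint angles in radians.
frames = [
    ((0.0, 0.0, 0.79), (0.0, 0.0, 0.0, 1.0), (0.10, -0.20, 0.30)),
    ((0.01, 0.0, 0.79), (0.0, 0.0, 0.0, 1.0), (0.12, -0.18, 0.31)),
]
buffer = io.StringIO()
write_motion_csv(frames, buffer)
buffer.seek(0)
loaded = read_motion_csv(buffer)
print(len(loaded), "frames,", len(loaded[0][2]), "joint columns")
```

Validating the column count on load is a cheap way to catch a skeleton mismatch before a dataset reaches the training environment.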

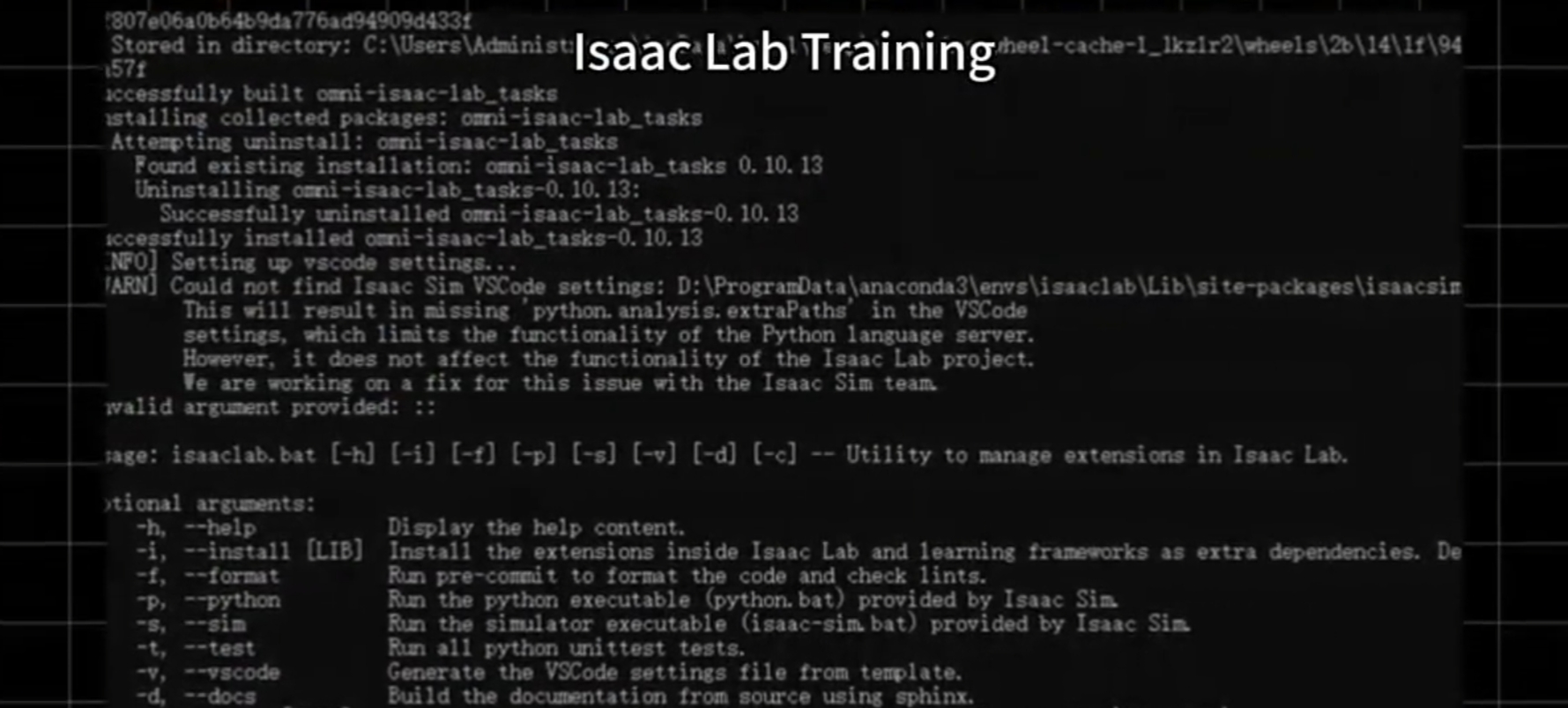

Step 3: AI Reinforcement Learning Training in Isaac Lab for Motion Optimization

The corrected and retargeted motion data is only a starting reference for the robot. Motion recorded from a human does not fully account for the robot’s mechanical characteristics, weight distribution, balance control, and joint response speed.

In the third step, import the optimized motion datasets into Isaac Lab for large-scale reinforcement learning training. Over millions of training iterations, the robot continuously adjusts motion details, optimizes balance control, and adapts to its mechanical limits. In the end, the robot learns a natural movement policy that matches its own hardware, achieving motion that is both smooth and stable. This is especially useful for complex robot dance and continuous movement tasks.
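Motion-imitation training of this kind usually rewards the robot for staying close to the reference clip. The sketch below shows one common shape for such a reward, an exponential of the squared joint error. It is a plain Python illustration and not Isaac Lab's API or the reward used in this workflow; the function name and the `sigma` scale are assumptions.

```python
import math

def tracking_reward(robot_joints, reference_joints, sigma=0.5):
    """Return a reward in (0, 1]: 1.0 for a perfect match, decaying with error."""
    if len(robot_joints) != len(reference_joints):
        raise ValueError("joint vectors must have the same length")
    squared_error = sum(
        (q - q_ref) ** 2 for q, q_ref in zip(robot_joints, reference_joints)
    )
    return math.exp(-squared_error / sigma ** 2)

# Made-up joint angles (radians) for one frame of the reference motion.
reference = [0.10, -0.20, 0.30]
perfect = tracking_reward([0.10, -0.20, 0.30], reference)
close = tracking_reward([0.15, -0.25, 0.30], reference)
far = tracking_reward([0.90, 0.60, -0.50], reference)
print(f"perfect={perfect:.3f} close={close:.3f} far={far:.3f}")
```

Because the reward is bounded and smooth, the policy is free to deviate slightly from the human reference when that is what it takes to keep its balance, which is the adaptation this step relies on. Practical setups combine terms like this with penalties for falls, torque, and joint limit violations.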

Step 4: Sim2Sim Secondary Simulation Verification in MuJoCo & RViz

To narrow the simulation-to-reality gap and reduce the risk of damaging the physical robot, Sim2Sim secondary verification is an essential part of the workflow.

After reinforcement learning in Isaac Lab, the trained motion policy and data packages are imported into mainstream tools such as MuJoCo (physics simulation) and RViz (visualization) for repeated simulation rehearsal and motion debugging. This step further improves motion stability, eliminates abnormal actions, and checks that the motion remains physically plausible under a second physics engine. It greatly reduces the debugging time and hardware wear of the real robot deployment that follows.
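One simple way to quantify a Sim2Sim check is to log joint angles for the same motion in both simulators and compare them frame by frame. The sketch below assumes the rollouts have already been exported as lists of joint-angle frames; it does not call Isaac Lab or MuJoCo, and the function names, the sample data, and the 0.05 rad tolerance are hypothetical.

```python
def max_joint_deviation(trajectory_a, trajectory_b):
    """Largest absolute per-joint difference between two equal-length rollouts."""
    if len(trajectory_a) != len(trajectory_b):
        raise ValueError("rollouts must have the same number of frames")
    worst = 0.0
    for frame_a, frame_b in zip(trajectory_a, trajectory_b):
        for q_a, q_b in zip(frame_a, frame_b):
            worst = max(worst, abs(q_a - q_b))
    return worst

def passes_sim2sim(trajectory_a, trajectory_b, tolerance_rad=0.05):
    """True when the two simulators agree within the tolerance on every joint."""
    return max_joint_deviation(trajectory_a, trajectory_b) <= tolerance_rad

# Made-up joint-angle rollouts (radians) logged from two simulators.
training_sim = [[0.10, -0.20], [0.12, -0.18], [0.15, -0.15]]
second_sim = [[0.10, -0.21], [0.13, -0.18], [0.15, -0.12]]
deviation = max_joint_deviation(training_sim, second_sim)
print(f"max deviation: {deviation:.3f} rad,",
      "pass" if passes_sim2sim(training_sim, second_sim) else "fail")
```

A policy that only behaves well in the simulator it was trained in tends to show a large deviation here, which is a useful warning sign before any real hardware is involved.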

Step 5: Final Deployment & Real-World Humanoid Robot Execution

After data correction, AI reinforcement learning, and secondary simulation verification, the final stable motion policy is deployed to the physical humanoid robot. With only a small amount of on-site fine-tuning and parameter adjustment, the robot can stably reproduce smooth, natural, human-like movements.

Whether for laboratory research verification, university teaching demonstrations, stage robot dance performances, or intelligent scene displays, the robot can move beyond rigid preset actions and deliver stable, coherent, and expressive motion.

Powerful Additional Resources to Accelerate Robot Training & Reduce Costs

To help university laboratories and research teams quickly carry out robot motion research and project development, Virdyn provides rich supporting resources:

140+ Pre-Corrected Professional Motion Datasets: Covering basic daily movements, sports actions, and various dance motions, helping robots learn quickly while shortening training cycles and reducing research costs.

Complete Simulation Engineering Packages, SDK and Source Code Support: Compatible with mainstream simulation software and multiple humanoid robot models, allowing engineers and students to quickly build reinforcement learning projects and accelerate real machine deployment progress.

Conclusion

Humanoid robot motion deployment no longer has to be a high-barrier research project that relies solely on complex algorithms and repeated debugging. With the DreamsCap X1 professional inertial motion capture system and Virdyn’s one-stop full-process solution, which covers VDRobot Studio data retargeting, Isaac Lab reinforcement learning, MuJoCo simulation verification, and final real robot deployment, universities, laboratories, and studios can complete the whole process from human motion collection to stable robot execution.

This standardized five-step workflow not only reduces research difficulty and project costs but also provides reliable, repeatable, and teachable technical support for humanoid robot motion control teaching and scientific research. If you are looking for a stable, efficient, and easy-to-deploy mocap and robot motion training solution, DreamsCap X1 is your ideal choice.

Post time: Apr-28-2026