The era of embodied AI and humanoid robots is here. With platforms like the Unitree G1 pushing the boundaries of hardware capabilities, robotics engineers face a new, complex challenge: How do we teach these machines to move with human-like agility and grace?

Traditional hard-coded control systems are often too rigid for complex, dynamic tasks. The modern solution lies in Imitation Learning—capturing real human motion and transferring it to the robot. However, mapping human motion directly to a robotic skeleton is not a plug-and-play process. It requires a robust pipeline spanning high-fidelity data collection, kinematic retargeting, and physics-based reinforcement learning.

In this guide, we will break down the end-to-end pipeline for deploying human motion to intelligent robots, highlighting the critical roles of VDSuit Full, VDRobot Studio, and advanced open-source RL frameworks like BeyondMimic.

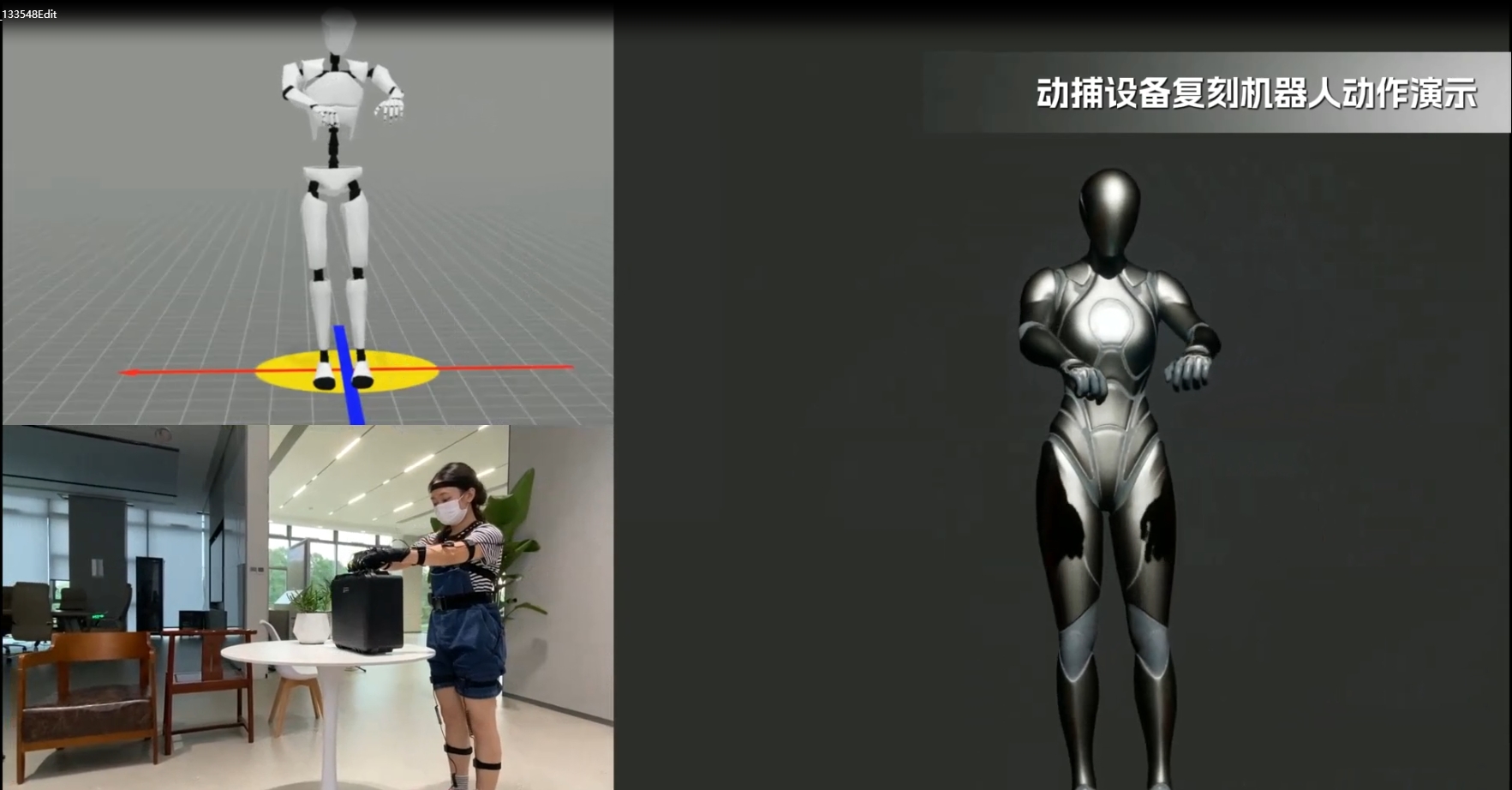

Step 1: High-Fidelity Data Collection with VDSuit Full

The foundation of any successful imitation learning model is the quality of the input data. If the initial motion capture (mocap) data is noisy or inaccurate, the resulting robot policy inherits those flaws and may fail outright.

This is where the VDSuit Full comes into play. Designed for professional-grade motion capture, the VDSuit Full provides the highly accurate, full-body kinematic data required for embodied AI research.

Why high-quality mocap matters for robotics:

- Precision Tracking: VDSuit Full captures nuanced human movements, including complex joint rotations and rapid dynamic shifts, ensuring no critical motion data is lost.

- Low Latency & High Frame Rate: Essential for capturing the fast-twitch movements required for dynamic tasks like running, jumping, or recovering from a stumble.

- Embodied AI Ready: By providing clean, continuous spatial data, the VDSuit Full drastically reduces the time engineers spend cleaning up jittery or broken data frames.
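To make "jittery or broken data frames" concrete, here is a minimal sketch of a sanity check you might run on any recorded take before retargeting. It is not part of the VDSuit Full software; the function name, the array layout, and both thresholds are assumptions chosen for illustration.

```python
import numpy as np

def find_suspect_frames(timestamps, joint_angles, max_gap_factor=1.5, max_speed=20.0):
    """Return indices of frames that follow a dropped-frame gap or an implausible jump.

    timestamps:   shape (T,), seconds, strictly increasing
    joint_angles: shape (T, J), radians, assumed already unwrapped
    max_gap_factor and max_speed (rad/s) are illustrative thresholds, not vendor values.
    """
    timestamps = np.asarray(timestamps, dtype=float)
    joint_angles = np.asarray(joint_angles, dtype=float)

    dt = np.diff(timestamps)                     # time between consecutive frames
    nominal_dt = np.median(dt)                   # robust estimate of the frame period
    gap = dt > max_gap_factor * nominal_dt       # a frame was probably dropped here

    # Per-joint angular speed between consecutive frames.
    speed = np.abs(np.diff(joint_angles, axis=0)) / dt[:, None]
    spike = (speed > max_speed).any(axis=1)      # any joint moving implausibly fast

    # Report the later frame of each suspicious pair.
    return np.flatnonzero(gap | spike) + 1
```

Frames flagged this way can be inspected, interpolated, or cut before the take is passed on. The cleaner the capture, the shorter this list tends to be.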

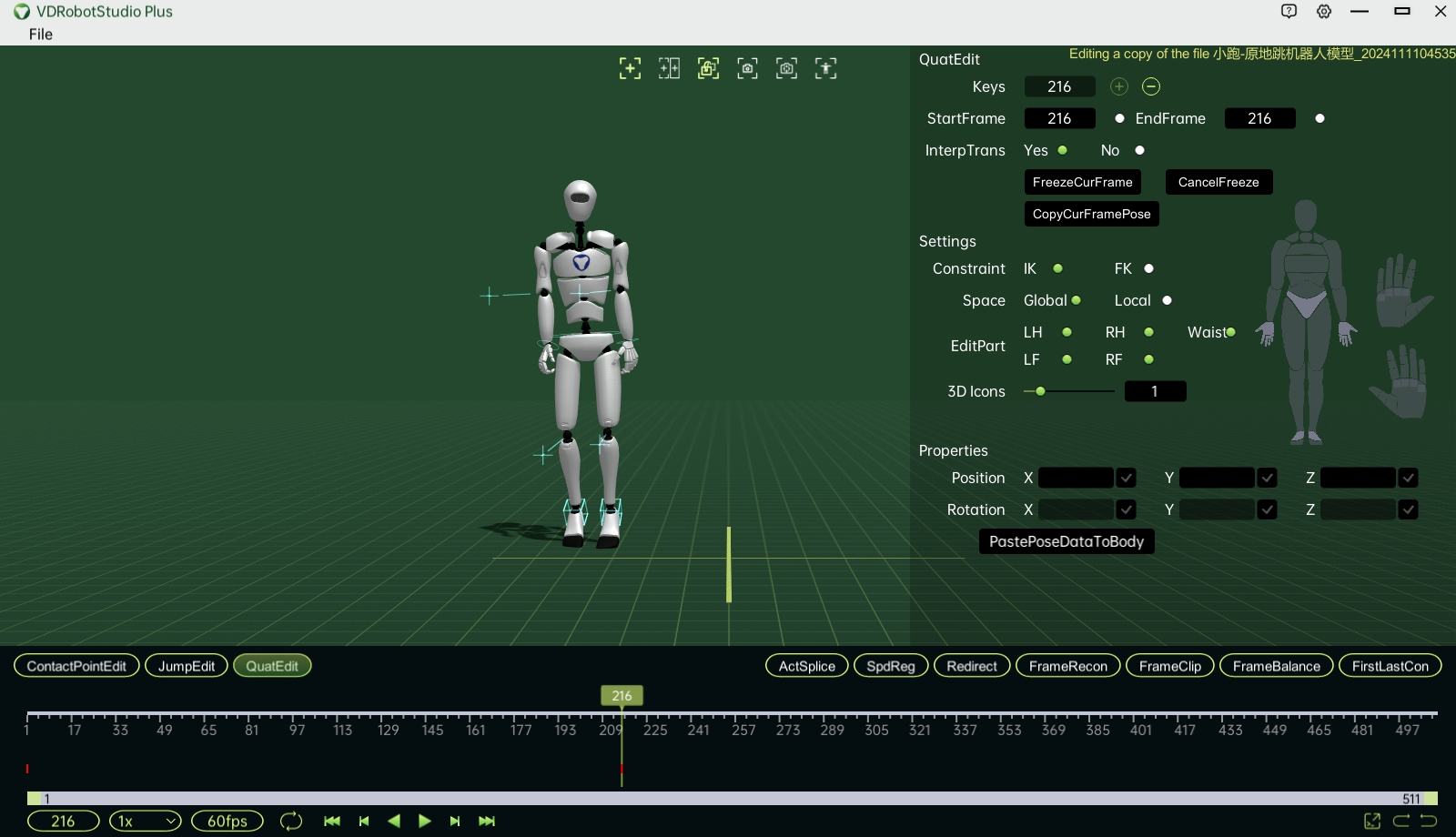

Step 2: Kinematic Retargeting with VDRobot Studio

Once you have captured the human motion, you face the Kinematic Gap. A human skeleton has different proportions, joint limits, and degrees of freedom (DoF) than a robot described in a URDF (Unified Robot Description Format) file.

If you send raw human joint angles to a robot, the physical discrepancies will cause severe errors. VDRobot Studio is the bridge that solves this problem.

As a dedicated motion data correction and retargeting software, VDRobot Studio allows engineers to seamlessly map human mocap data onto specific robotic skeletons (such as the Unitree G1).

Key capabilities of VDRobot Studio in the pipeline:

- Accurate Skeleton Mapping: Translates human joint rotations into target angles for the joints defined in the robot’s URDF.

- Joint Limit Enforcement: Automatically adjusts the motion data to ensure it does not exceed the mechanical limits of the robot’s motors, preventing hardware damage.

- Data Export for Simulation: VDRobot Studio exports the cleaned, retargeted kinematic trajectories in standard formats (e.g., .npy or .bvh), ready for ingestion into physics simulators.
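The joint-limit and export ideas above can be sketched with NumPy. This is not VDRobot Studio's algorithm (a real retargeter likely does more than hard clipping); the limit values, the function name, and the file name are hypothetical, and real limits would come from the robot's URDF.

```python
import os
import tempfile
import numpy as np

# Hypothetical limits (radians) for three joints; real values come from the URDF.
JOINT_LOWER = np.array([-2.5, -0.5, -0.1])
JOINT_UPPER = np.array([2.5, 2.9, 2.8])

def clamp_to_joint_limits(trajectory, lower, upper):
    """Clip a (T, J) trajectory into [lower, upper]; also return a mask of changed samples."""
    trajectory = np.asarray(trajectory, dtype=float)
    clamped = np.clip(trajectory, lower, upper)
    violated = clamped != trajectory
    return clamped, violated

# Toy retargeted motion: 3 frames, 3 joints, with a few out-of-range samples.
retargeted = np.array([[0.0, 0.2, 0.0],
                       [3.0, -1.0, 1.0],
                       [1.0, 3.5, 2.9]])
clamped, violated = clamp_to_joint_limits(retargeted, JOINT_LOWER, JOINT_UPPER)

# Round-trip through a .npy file, the array format mentioned above.
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "retargeted_motion.npy")
    np.save(path, clamped)
    reloaded = np.load(path)
```

The `violated` mask is worth keeping: a motion that hits its limits on many frames is usually a sign that the clip does not suit the robot, not something clipping alone can fix.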

Step 3: Bridging the Reality Gap with BeyondMimic (Physics & RL)

At this stage, VDRobot Studio has produced a kinematically valid trajectory. However, if you replay this purely geometric data directly on a real robot, it will most likely fall over. Why? Because pure kinematics ignores dynamics: gravity, momentum, friction, and motor torque limits.

To achieve dynamic balance, the retargeted data must be processed through a physics simulator (like NVIDIA Isaac Sim) using Reinforcement Learning (RL). This is where open-source frameworks like BeyondMimic excel.

How BeyondMimic transforms kinematics into dynamic control:

- Imitation Learning: BeyondMimic ingests the retargeted data from VDRobot Studio. Inside the physics engine, an AI policy is trained to “mimic” this data while simultaneously learning to maintain balance against virtual gravity.

- Guided Diffusion & Zero-Shot Control: BeyondMimic doesn’t just memorize animations; it extracts motion primitives. This allows engineers to use guided diffusion to command the robot in real-time (e.g., via joystick) to perform complex tasks without retraining the neural network.

- Domain Randomization: To prepare for the real world, the simulation introduces random variations in mass, friction, and motor latency. This ensures the trained policy is robust enough to survive the Sim-to-Real transfer.
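Two of the ideas above, rewarding the policy for tracking the reference motion and randomizing the physics per episode, can be sketched in a few lines. This is a generic illustration, not BeyondMimic's actual reward or configuration; the function names, the `sigma` value, and every range below are assumptions.

```python
import numpy as np

def tracking_reward(q_robot, q_ref, sigma=0.5):
    """Reward in (0, 1]: equals 1 when the simulated joint pose matches the
    reference frame and decays exponentially with the squared joint error."""
    err = np.sum((np.asarray(q_robot, dtype=float) - np.asarray(q_ref, dtype=float)) ** 2)
    return float(np.exp(-err / sigma ** 2))

def sample_randomized_params(rng):
    """Draw one set of perturbed physics parameters for a training episode.
    All ranges are illustrative placeholders."""
    return {
        "mass_scale": rng.uniform(0.9, 1.1),        # multiplier on link masses
        "friction": rng.uniform(0.4, 1.2),          # ground friction coefficient
        "motor_latency_s": rng.uniform(0.0, 0.02),  # actuation delay in seconds
    }

rng = np.random.default_rng(0)
params = sample_randomized_params(rng)
```

During training, the simulator would be reconfigured with a fresh `params` draw at each episode reset, while `tracking_reward` is evaluated at every step against the matching frame of the retargeted trajectory. A policy that scores well across all draws has had to learn balance, not just playback.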

Step 4: Hardware Deployment (Sim-to-Real)

The final step is deploying the trained RL policy onto the physical hardware.

Because the VDSuit Full provided clean initial data, VDRobot Studio handled the skeleton mapping, and BeyondMimic trained the policy against simulated physics, the resulting neural network (often exported as an ONNX model) stands a much better chance of holding up on real hardware.

Using the robot’s native SDK (e.g., Unitree SDK2), the onboard computer runs the neural network at a fixed, high frequency (e.g., 50 to 100 Hz). At each step the policy reads real-time IMU and joint states and outputs target positions to the low-level motor controllers, resulting in fluid, human-like robotic movement in the real world.
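The shape of such a control loop can be sketched as follows. Nothing here calls the Unitree SDK or an ONNX runtime: `policy`, `read_observation`, and `send_targets` are hypothetical callables standing in for model inference and SDK I/O, and `action_scale` and the default pose are made-up values.

```python
import time
import numpy as np

def run_control_loop(policy, read_observation, send_targets,
                     default_pose, action_scale=0.25, rate_hz=50.0, n_steps=100):
    """Run a fixed-rate loop: observe, infer, send joint position targets."""
    period = 1.0 / rate_hz
    next_tick = time.perf_counter()
    for _ in range(n_steps):
        obs = read_observation()                       # IMU + joint states
        action = np.asarray(policy(obs), dtype=float)  # network output
        # Actions are treated as offsets around a nominal standing pose.
        send_targets(default_pose + action_scale * action)
        # Sleep until the next tick so timing errors do not accumulate.
        next_tick += period
        time.sleep(max(0.0, next_tick - time.perf_counter()))

# Stand-ins so the sketch runs without a robot or a trained model.
default_pose = np.zeros(3)
sent = []
run_control_loop(
    policy=lambda obs: np.ones(3),             # placeholder for model inference
    read_observation=lambda: np.zeros(6),      # placeholder for SDK state reads
    send_targets=lambda q: sent.append(q),     # placeholder for SDK motor commands
    default_pose=default_pose,
    n_steps=5,
)
```

On a real robot, the three callables would wrap the exported model and the vendor SDK, and the loop would normally run alongside safety checks such as torque limits and an emergency stop.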

Conclusion: A Complete Ecosystem for Embodied AI

Deploying human motion to humanoid robots is no longer science fiction, but it requires a strict, professional pipeline.

Reliable Sim-to-Real deployment starts with clean initial data. By using the VDSuit Full for motion capture and VDRobot Studio for kinematic retargeting, robotics engineers can feed high-quality reference motion into RL frameworks like BeyondMimic.

This combination of proprietary hardware/software and open-source AI frameworks is the key to unlocking the true potential of embodied intelligence.

Ready to accelerate your humanoid robotics research?

Discover how the [VDSuit Full] and [VDRobot Studio] can streamline your Imitation Learning pipeline today.

Post time: May-15-2026